A couple of years ago a paper titled Progressive Growing of GANs for Improved Quality, Stability, and Variation cropped up on my reading list. It describes growing generative adversarial networks progressively, starting with low-resolution images, and then building up more detail as training goes on. It got quite a bit of press at the time because the authors used their idea to generate realistic, unique images of human faces.

Looking at these images, it seems the neural net would have to learn a vast number of things to produce them. Some of this seems relatively simple and factual - say, that eye colours should match. But other aspects are fantastically complex and hard to articulate. For instance, what nuances are needed to link the configuration of eyes, mouth and skin creases into a coherent facial expression? Of course, I'm anthropomorphising a statistical machine here, and we may be fooled by our intuition - it could turn out that there are relatively few working variations, and that the solution space is more constrained than we imagine. Maybe the most interesting thing is not the images themselves, but rather the uncanny effect they have on us.

Some time later, a favourite podcast of mine mentioned PhyloPic, a database of silhouette images of animals, plants and other lifeforms. Musing along the lines above, I wondered what would result if you trained a system like the one in the Progressive GANs paper on a very diverse dataset of this sort. Would you just generate many variations of a few known animal types, or would there be enough variation to do neural-network driven speculative zoology? However things played out, I was pretty sure I would get a few good prints for my study wall out of it, so I set out to satisfy my curiosity with an attitude of open-minded experimentation.

I adapted the code from the progressive GANs paper, and trained a model on the complete PhyloPic dataset for 12,000 iterations, using a Google Cloud instance with 8 NVIDIA K80 GPUs. Total training time, including some false starts and experiments, was 4 days. I used the final trained model to produce 50k individual images, and then spent hours poring over the results, categorising, filtering and collating images. I also did some light editing by flipping images to orient creatures in the same direction, because I found this a bit more visually satisfying. This hands-on approach means that what you see below is a sort of collaboration between me and the neural net - it did the creative work, and I edited.
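The flipping step is easy to express in code. The following is only a minimal sketch, not the code used for this project: it assumes each image is a NumPy array with width as its second axis, and `face_same_way` and its `faces_left` flag are hypothetical names. Deciding which way a creature faces is left to a human here, in keeping with the hands-on editing described above.

```python
import numpy as np


def face_same_way(image: np.ndarray, faces_left: bool) -> np.ndarray:
    """Mirror an image horizontally if the creature in it faces left.

    `image` is assumed to have shape (height, width) or
    (height, width, channels). `faces_left` is a hand-made judgement,
    not something this function detects.
    """
    if faces_left:
        # Reversing the second axis swaps left and right.
        return image[:, ::-1].copy()
    return image
```

Images judged to be facing right pass through unchanged, so the function can be applied to a whole hand-labelled batch.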

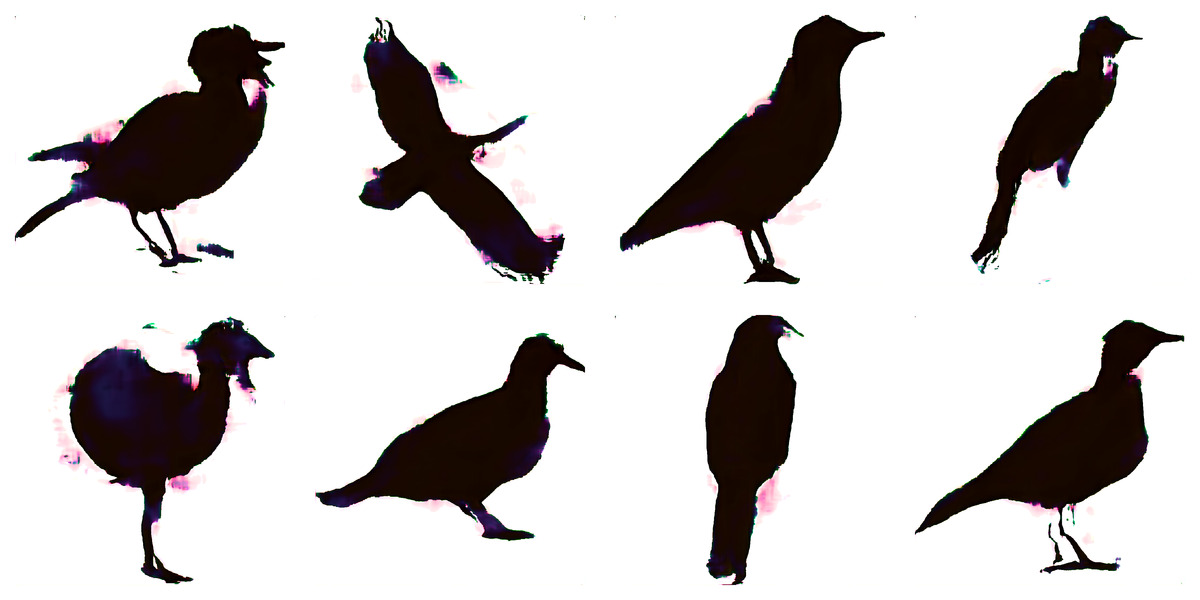

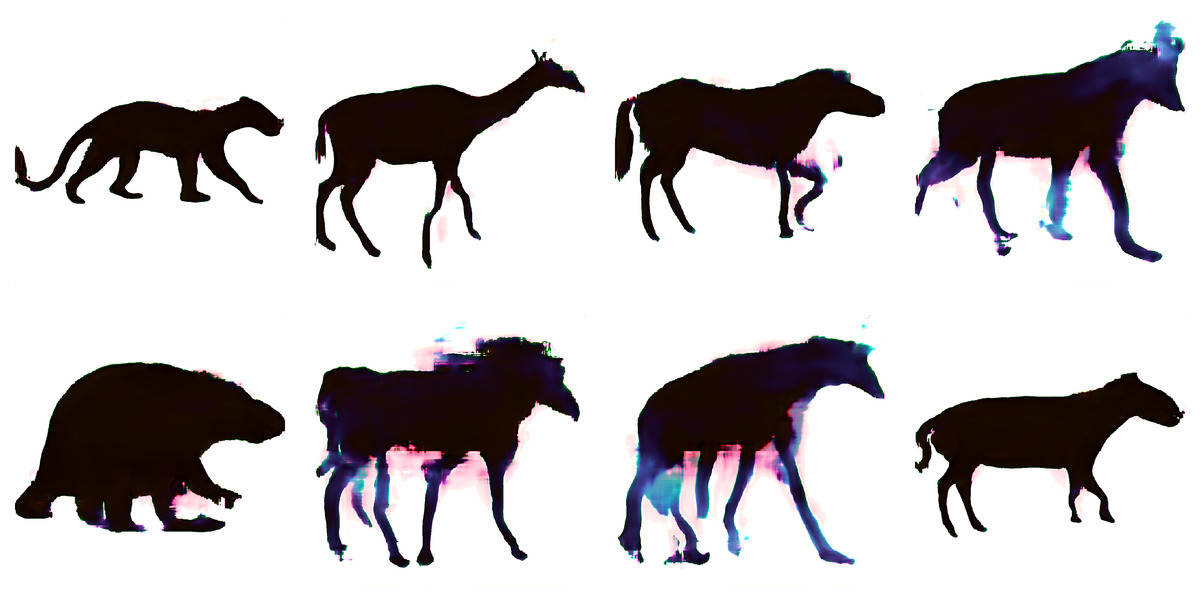

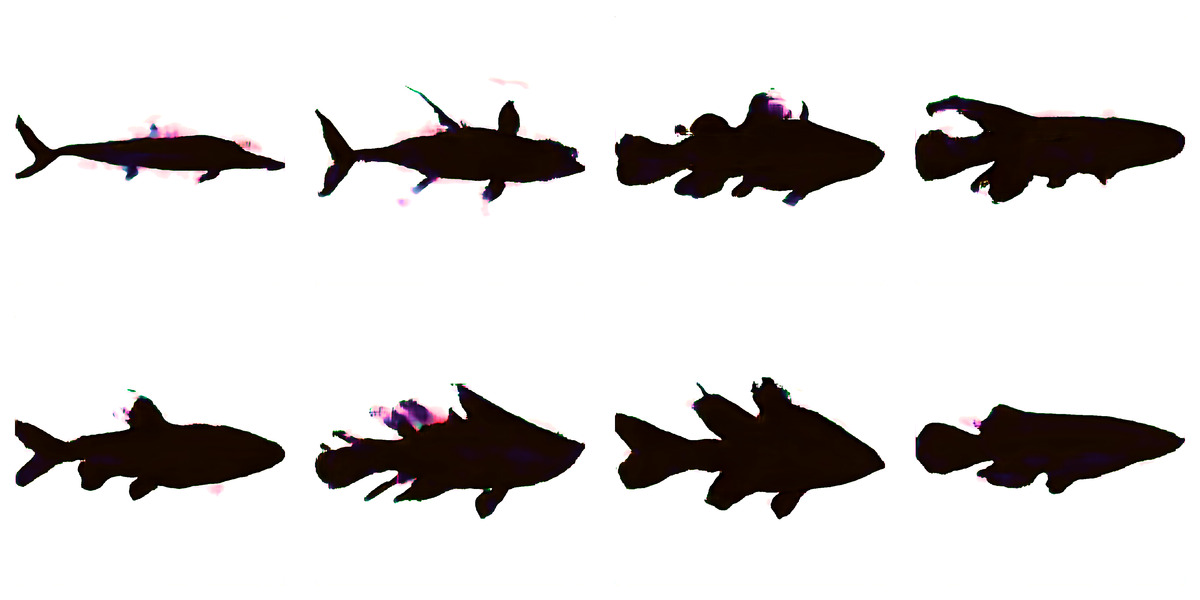

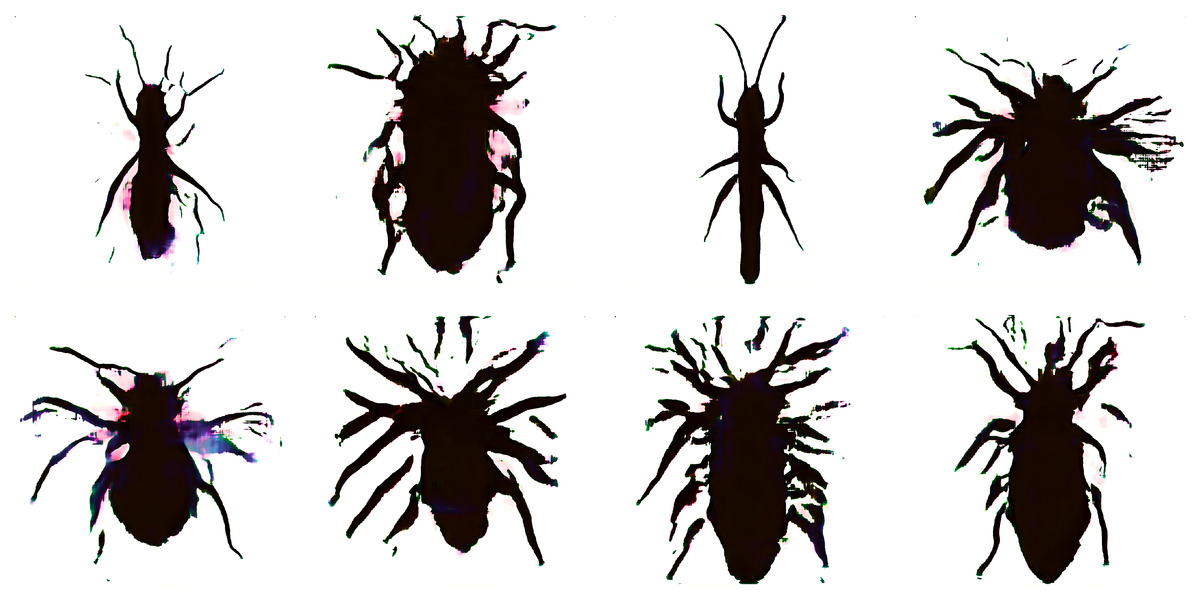

The first surprising thing to me was how aesthetically pleasing the results were. Much of this is certainly a reflection of the good taste of the artists who produced the original data. However, there were also some happy accidents. For instance, it seems that whenever the neural net enters uncertain territory - whether it be fiddly bits that it hasn't quite mastered yet or complete flights of vaguely biological fantasy - chromatic aberrations begin to enter the picture. This is curious, because the input set is entirely in black and white, so colour cannot be a learned solution to some generative problem. Any colour must necessarily be a pure artefact of the mind of the machine. Delightfully, one of the things that consistently triggers chromatic aberrations is the wings of flying insects. This means that it generated hundreds and hundreds of variations of evocatively-coloured "butterflies" like the ones above. I wonder if this could be a useful observation - if you train using only black-and-white images, but demand output in full colour, splotches of colour might be a useful way to see where the model is still not able to accurately represent the training set.
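One way to turn that last idea into something measurable is to compute how far each pixel strays from grey. This is a rough sketch rather than anything I ran on the real output: it assumes generated images are NumPy arrays of shape (height, width, 3) with values in [0, 1], and `chroma_map`, `colour_fraction` and the 0.1 threshold are hypothetical names and values picked for illustration.

```python
import numpy as np


def chroma_map(rgb: np.ndarray) -> np.ndarray:
    """Per-pixel colour spread: largest channel minus smallest channel.

    A pure grey pixel (R == G == B) scores 0; the more saturated the
    pixel, the higher the score. Input shape is assumed to be
    (height, width, 3) with values in [0, 1].
    """
    rgb = rgb.astype(np.float32)
    return rgb.max(axis=-1) - rgb.min(axis=-1)


def colour_fraction(rgb: np.ndarray, threshold: float = 0.1) -> float:
    """Fraction of pixels whose colour spread exceeds `threshold`.

    The threshold is an arbitrary illustrative value, not a tuned one.
    """
    return float((chroma_map(rgb) > threshold).mean())
```

Since the training set is black and white, any pixel with a high score is colour the model made up, so the map could be overlaid on an image to highlight the regions it is least sure about, and the fraction could be used to rank images from most to least "confident".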

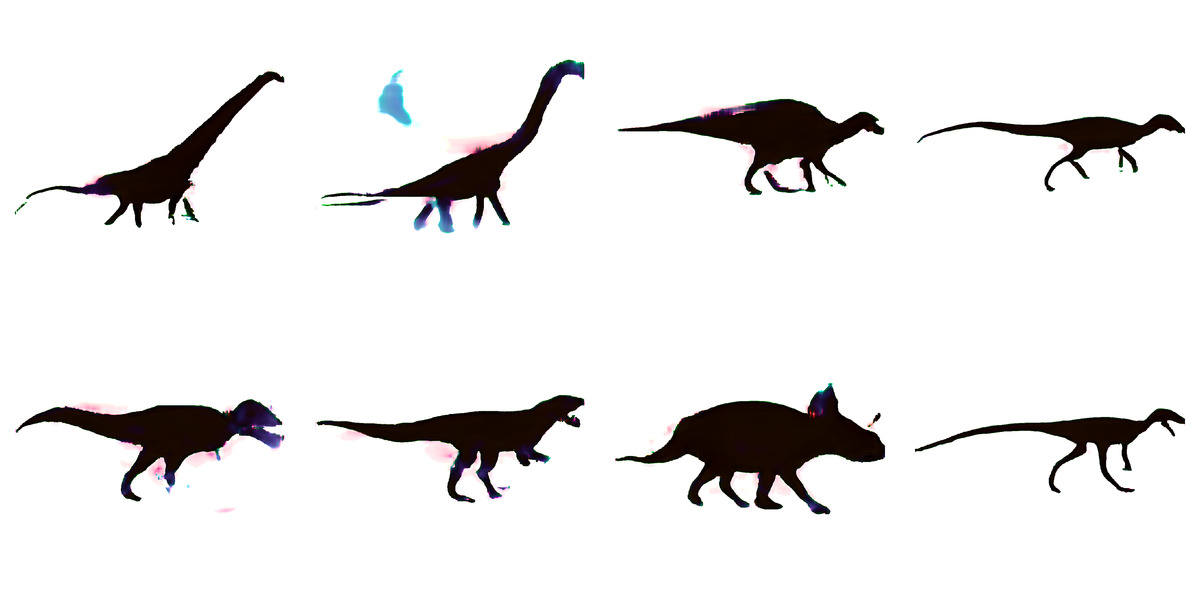

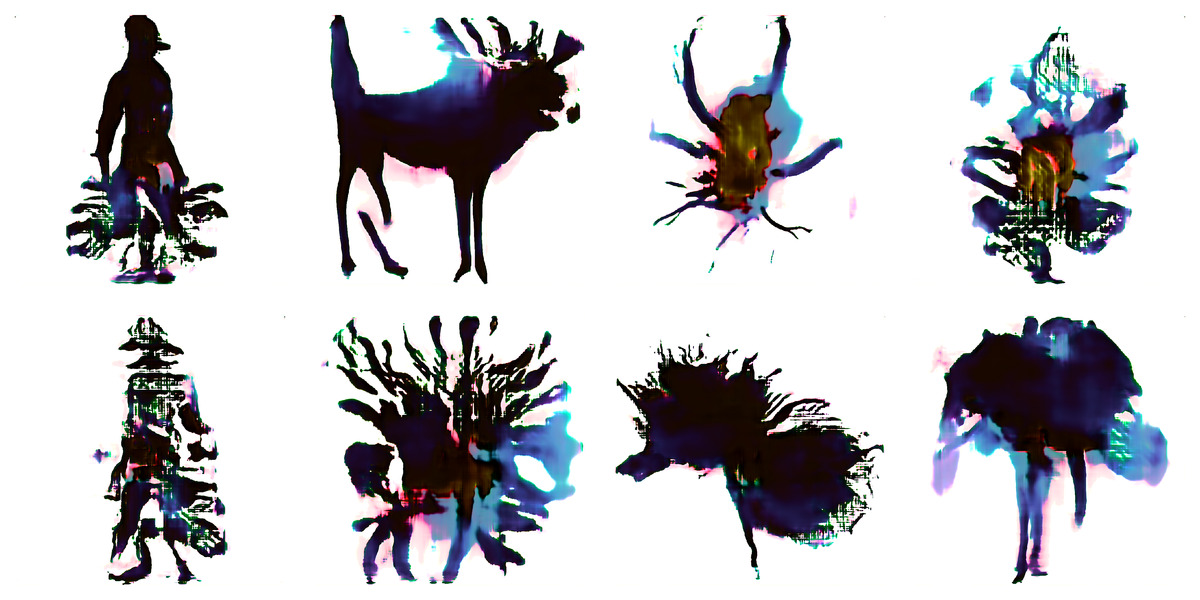

The bulk of the output is a huge variety of entirely recognisable silhouettes - birds, various quadrupeds, reams of little gracile theropod dinosaurs, sauropods, fish, bugs, arachnids and humanoids.

Stranger things

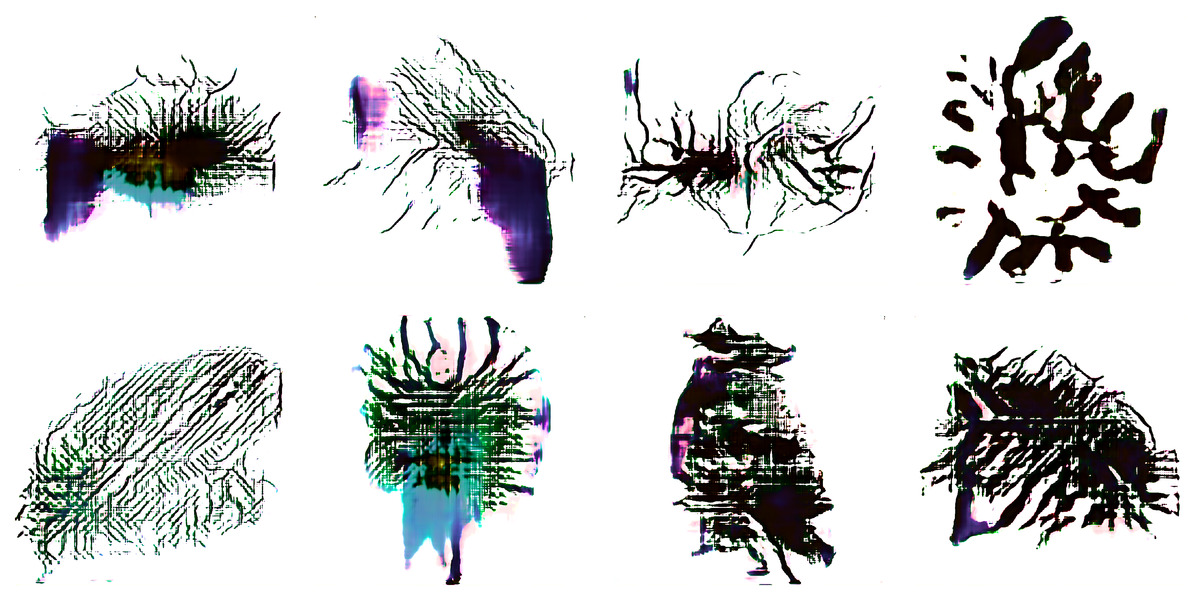

Once the known critters have been weeded out, we get to stranger things. One of the questions I had going into this was whether plausible animal body plans that don't exist in nature would emerge - perhaps hybrids of the creatures in the input set. Well, with careful search and a helpful touch of pareidolia, I found hundreds of quadrupedal birds, snake-headed deer and other fantastical monstrosities.

Straying even further into the unknown, the model produced weird abstract patterns and unidentifiable entities, all with a vaguely biological, "life-ish" feel to them.

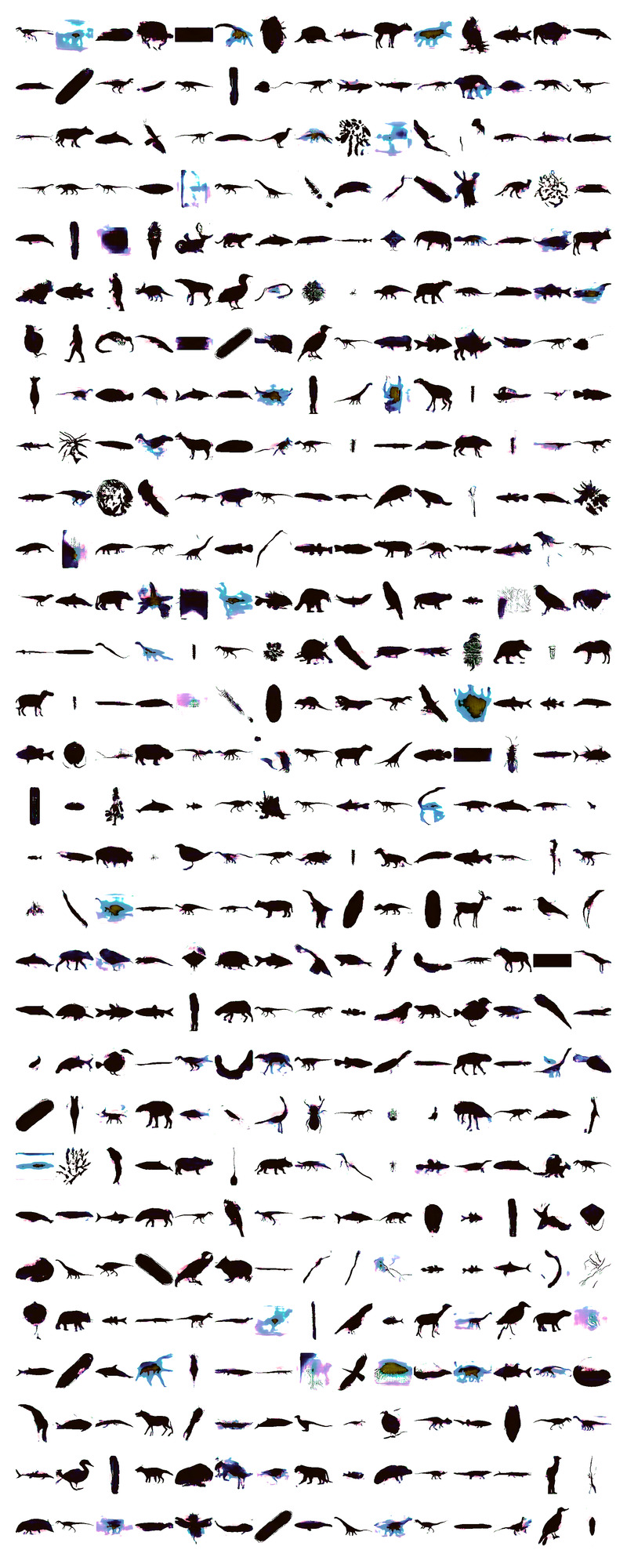

A random sample

What doesn't come through in the images above is the sheer abundance of variation in the results. I'm having a number of these image sets printed and framed, and the effect of hundreds of small, detailed images side by side at scale is quite striking. To give some idea of the scope of the full dataset, I'm including one of these prints below - this one is a random sample from the unfiltered corpus of images.